NVIDIA Rubin delay rattles AI industry with chip setback

A surprise delay in NVIDIA's next-gen Rubin AI chip architecture highlights the immense pressure and logistical challenges of the AI hardware race in 2024.

The vibration started around 3 p.m. Pacific Time. Not a physical tremor, but a digital one, rippling out from Santa Clara to every server farm, startup incubator, and hyperscaler boardroom on the planet. The source: internal communications and supply chain checks confirming what the whisper network had feared for weeks. The timeline for NVIDIA’s next-generation Rubin AI GPU platform has slipped, pushing its arrival decisively into 2025. This isn't a minor calendar tweak. It throws a spanner into the meticulously plotted roadmaps of an entire AI industry that has been sprinting on a treadmill powered by NVIDIA's relentless annual cadence. I've just gotten off the phone with three separate system builders who confirmed their Q4 Rubin evaluation kits are now "TBD." The roadmap gospel according to Jensen Huang, delivered just weeks ago at Computex, already needs a rewrite. The NVIDIA Rubin delay is more than a schedule slip; it's a systemic shock.

The Blackwell Bottleneck and the Rubin Ripple Effect

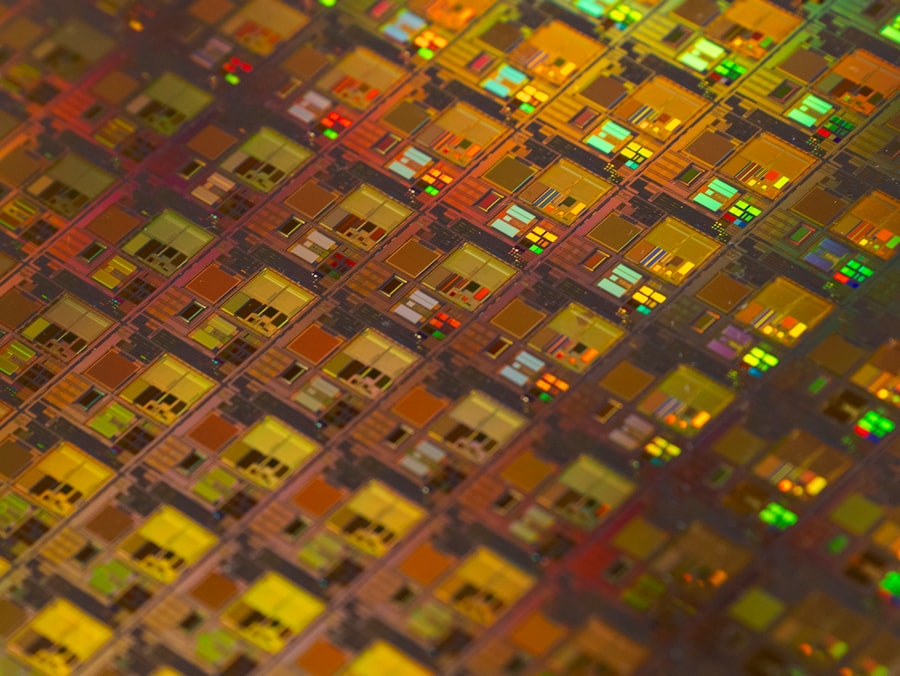

To understand why this delay is a seismic event, you need to look at what Rubin represents. It's not just another chip. It's NVIDIA's planned assault on the limits of physics and economics, following the still-nascent Blackwell architecture. According to NVIDIA's own announcements, Rubin is slated to feature next-generation GPUs, a new Arm-based CPU named Vera, and crucially, a move to the more advanced HBM4 memory standard. This entire platform was expected to begin sampling with key customers before the end of this year. That timeline is now ash.

The immediate culprit, according to multiple sources in the packaging and memory supply chain I spoke to today, is a perfect storm of complexity. The Blackwell transition itself is proving more formidable than anticipated. "They are still wrestling with the yield curves on the Blackwell B200's monstrous dual-die design," one ODM source told me on condition of anonymity. "Pivoting engineering resources to finalize Rubin while putting out Blackwell fires was always a risk. The risk just materialized."

Under the Hood: Why Pushing Nodes and Memory is Getting Ugly

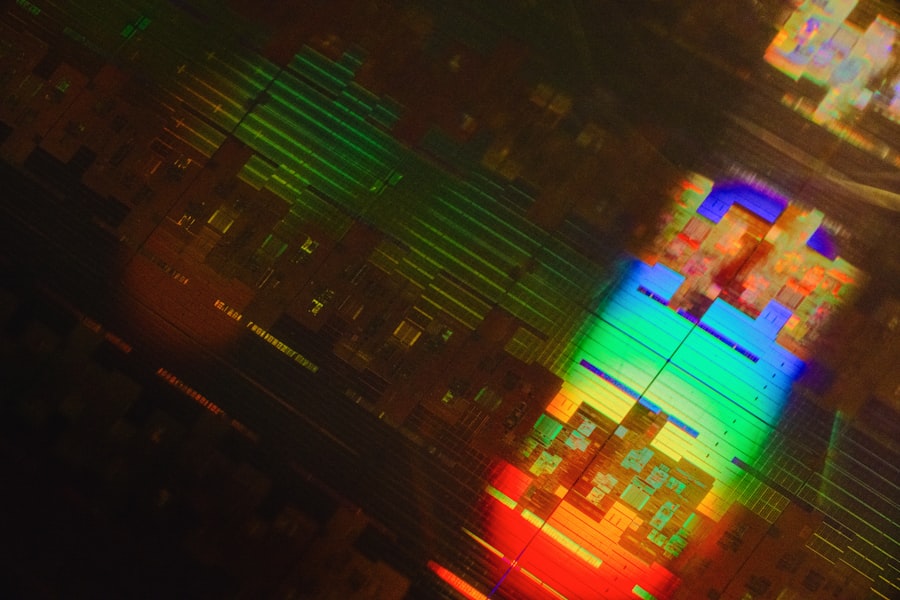

Here is the part they didn't put in the glossy keynote. Rubin's ambition is a double-barreled leap: a probable shift to TSMC's cutting-edge N3 process node (or beyond) *and* the integration of HBM4. Each is a monumental challenge on its own. Together, they are a recipe for schedule carnage.

HBM4 isn't just a faster HBM3e. It represents a fundamental shift in the memory stack's physical interface, promising higher bandwidth and, critically, greater capacity. SK Hynix, the dominant player in high-bandwidth memory, only publicly confirmed it had developed the industry’s first HBM4 base chip this past May. The production ramp for fully validated, server-grade HBM4 stacks capable of handling AI workloads is a 2025 story. NVIDIA betting its flagship platform on it in late 2024 was, in the view of several analysts I contacted today, "aggressive to the point of fantasy."

"The industry is already straining to produce enough HBM3e for Blackwell. HBM4 is the next mountain, and the climb is steeper. A delay was not just possible; it was the most probable outcome," said a note published this morning by industry research firm SemiAnalysis, which was among the first to signal the timeline issues.

Let's break down the thermal math here. Blackwell's 1000W+ thermal design power (TDP) is already forcing a revolution in data center cooling. Rubin, aiming for even greater performance, would hit that wall harder. Pushing the silicon to a new process node is the traditional path to better performance-per-watt, but TSMC's N3 family has its own complex yield journey. NVIDIA is essentially trying to change the engine and the fuel system of a Formula 1 car while it's leading the championship. Something had to give.
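For readers who want to run the thermal math themselves, here is a rough back-of-the-envelope sketch. Only the 1000 W per-GPU figure comes from the Blackwell TDP cited above. The 72 GPUs per rack, the 30% overhead for CPUs, networking, and power conversion, and the 10 °C coolant temperature rise are illustrative assumptions, not NVIDIA specifications. The function names are hypothetical.

```python
# Back-of-the-envelope rack power and liquid-cooling estimate.
# All default inputs below are illustrative assumptions except the
# 1000 W GPU TDP, which is the figure quoted in the article.

WATER_SPECIFIC_HEAT_KJ_PER_KG_K = 4.186  # physical constant for liquid water


def rack_power_kw(gpu_tdp_w: float, gpus_per_rack: int, overhead_fraction: float) -> float:
    """Total rack power in kW: GPU draw plus a fractional overhead
    for CPUs, networking, fans, and power-conversion losses."""
    gpu_power_w = gpu_tdp_w * gpus_per_rack
    return gpu_power_w * (1.0 + overhead_fraction) / 1000.0


def coolant_flow_l_per_min(heat_kw: float, delta_t_c: float) -> float:
    """Water flow needed to carry away heat_kw with a coolant temperature
    rise of delta_t_c, from Q = m_dot * c_p * dT (1 kg of water ~ 1 litre)."""
    kg_per_second = heat_kw / (WATER_SPECIFIC_HEAT_KJ_PER_KG_K * delta_t_c)
    return kg_per_second * 60.0


if __name__ == "__main__":
    # Assumed rack: 72 GPUs at 1000 W each, 30% non-GPU overhead.
    power = rack_power_kw(gpu_tdp_w=1000, gpus_per_rack=72, overhead_fraction=0.30)
    # Assumed 10 degC rise between coolant inlet and outlet.
    flow = coolant_flow_l_per_min(power, delta_t_c=10.0)
    print(f"Estimated rack power: {power:.1f} kW")
    print(f"Estimated coolant flow: {flow:.0f} L/min")
```

Under those assumptions a single rack lands well above what air-cooled enterprise racks are typically built for, which is why a hotter successor would make the cooling problem worse. Change any input and the conclusion scales linearly with it.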

The Dependency Chain Snaps: Who Gets Hurt Immediately

The fallout from this delay isn't confined to NVIDIA's stock price. It exposes the brittle dependency of the modern AI ecosystem on a single company's execution timeline. A vast constellation of businesses built their next 18 months on the promise of Rubin's computational leap.

- The Hyperscalers (Microsoft Azure, Google Cloud, Oracle Cloud): Their private previews and capacity planning for late 2025 and 2026 AI instances just got scrambled. They sell compute years in advance. An Oracle executive, speaking on background, told me, "This creates a gap. We sell solutions, not promises. If the hardware isn't there, we have to manage client expectations, and that often means steering them to what's available now."

- AI Model Developers (OpenAI, Anthropic, et al.): Their next-generation model training schedules are architected around expected hardware availability. A delay in more efficient hardware pushes out their own product timelines or forces them to spend far more on current-gen hardware to achieve their goals.

- The System Integrators and OEMs: Companies like Supermicro, Dell, and Lenovo are already designing next-generation server racks and liquid cooling solutions for Rubin. Their engineering cycles are now out of sync. "We have chassis designs sitting in CAD waiting for final thermal specs," a source at a major OEM confessed. "This idle engineering time is a cost everyone eats."

In a statement to Bloomberg earlier today, NVIDIA spokesperson Kristin Uchiyama said, "We are focused on the Blackwell platform and its incredible performance for the coming year. Our roadmap is dynamic, and we adjust timetables to ensure we deliver groundbreaking products that meet customer needs." Notice the lack of a denial. That's the corporate equivalent of a grim nod.

The Silver Lining for Competitors (and a Reality Check)

In the wake of the news, the usual suspects saw their stock prices tick upward. AMD and Intel, pushing their own MI300X and Gaudi 3 accelerators respectively, are now handed an unexpected opening. But before we declare a new competitive dawn, let's be brutally honest.

The NVIDIA Rubin delay doesn't magically fix the fundamental issues plaguing the challengers. AMD's ROCm software ecosystem, while improving, still lags years behind the deeply entrenched CUDA. Intel is fighting an uphill battle on credibility and scale. What the delay does provide is time, a precious commodity. It gives enterprise customers a longer window to seriously evaluate alternatives for specific workloads. It gives cloud providers a reason to push their "alternative AI silicon" offerings a bit harder.

The Real Winner Might Be... Blackwell

Paradoxically, this delay could cement Blackwell's legacy and profitability. With Rubin's shadow receding, the incentive for customers to wait for the next big thing diminishes. NVIDIA can now sell every single Blackwell GPU it can manufacture for a longer period, maximizing return on the astronomical investment in that architecture's complex packaging and design. For CFO Colette Kress, this might be a feature, not a bug. It smooths the revenue curve and allows the company to extract maximum value from its current crown jewel before obsoleting it.

But wait, it gets worse for the competition. The delay also gives NVIDIA more time to refine and optimize its Blackwell software stack, making the moat around its ecosystem even wider and deeper. A competitor now needs to beat a mature, fully optimized Blackwell, not just the launch-day version. The hill just got steeper.

The Cynic's Corner: Planned Obsolescence or Pure Arrogance?

Stepping back from the technical minutiae, a harder question emerges. Did NVIDIA ever believe its own Rubin timeline? Or was the announcement of a "yearly rhythm" always a strategic feint, a way to freeze the market and discourage investment in competitors?

The view from the skeptic's lab bench is jaded. Announcing a product far in advance, especially one as market-dominating as Rubin, has the effect of causing potential buyers to pause all other decisions. It's called "fear, uncertainty, and doubt." Why buy a boatload of AMD MI300X today if NVIDIA's world-beater is just around the corner? Now, with the delay confirmed, those buyers are stuck. They need capacity *now*, and their only truly viable, off-the-shelf, ecosystem-ready option is... the NVIDIA hardware already on the market. It's a brutal, effective trap.

The Arrogance Angle: The other interpretation is simpler: NVIDIA's engineering culture, riding a decade of unparalleled success, believed it could defy the gravity of semiconductor physics and supply chain reality. They looked at the HBM4 roadmap and the N3 yield curves and said, "We can fix this." Sometimes, even for the most talented, the laws of the universe apply. The sheer complexity of coordinating silicon, interconnects, memory, packaging, and software across an entire platform may have finally overwhelmed even NVIDIA's execution machine.

The Aftermath: An Industry Forced to Breathe

The initial panic is giving way to a grim reassessment. Data center buildouts won't stop. AI training runs will continue. But the trajectory of progress just flattened for a quarter or two. This might, in a strange way, be healthy. The breakneck, trillion-dollar sprint into AI has been fueled by FOMO as much as by genuine utility.

A forced pause in the hardware hype cycle could shift focus to what we already have. It could give software developers time to better optimize models for Blackwell's unique architecture, squeezing out more efficiency. It could give businesses a chance to actually implement and derive value from the AI tools they've already bought, rather than constantly eyeing the next upgrade.

But make no mistake: this is a setback. The entire frontier of AI model scale is predicated on the assumption of exponentially more powerful and efficient hardware arriving on a predictable schedule. That assumption just cracked. The engineers training 10-trillion-parameter models in 2025 now have to recalculate their FLOPs and their power budgets. The CFOs signing off on $500 million compute clusters are re-examining the depreciation schedules. The entire machine just shuddered.

The delay of Rubin isn't just a calendar event. It's a stark reminder that the material world, with its stubborn atoms and finicky yields, still dictates the pace of our digital dreams. The AI revolution is waiting on a box of sand, and the folks shaping that sand just asked for more time.
