Logical qubit error correction milestone achieved

Logical qubit error correction achieved via surface code, bringing fault-tolerant quantum computing closer.

Logical qubit error correction just shattered the glass ceiling. Here is what happened inside the lab.

Logical qubit error correction has just notched a victory that quantum computing skeptics thought was years away. Forty-eight hours ago, a team from Google Quantum AI quietly posted results to arXiv while the rest of the world was arguing about something else. They showed a surface code logical qubit operating with a logical error rate that is actually lower than the physical error rate of its constituent qubits. This is the crossover point. The point where adding more qubits starts to make the system more reliable, not less. And it happened on a real device, the Sycamore processor, inside a dilution refrigerator at Google's lab in Santa Barbara.

Let me cut through the hype. Quantum computers are spectacularly fragile. Every qubit is a tiny, screaming toddler that wants to flip its state at random. You can bundle a bunch of physical qubits into a single 'logical qubit' so that if one toddler throws a tantrum, the others vote it down. That is the idea behind logical qubit error correction. But for years, the error correction itself introduced more errors than it fixed. The cure was worse than the disease. This new result, posted as a preprint on October 7, 2024, changes that equation.
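The "vote it down" idea can be made concrete with the simplest possible toy: a classical repetition code in which n copies of a bit each flip independently with probability p, and a majority vote recovers the value. This is a minimal sketch under that independent-flip assumption, not the surface code itself, but it shows why redundancy only pays off when p is small enough.

```python
from math import comb


def majority_vote_error(p: float, n: int) -> float:
    """Probability that a majority vote over n independent copies is wrong.

    Each copy flips independently with probability p. The vote fails when
    more than half of the copies flip, so we sum the upper binomial tail.
    """
    if n % 2 == 0:
        raise ValueError("use an odd number of copies so the vote cannot tie")
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n // 2 + 1, n + 1))


if __name__ == "__main__":
    # Compare a single copy (n = 1) with larger votes at low and high noise.
    for p in (0.01, 0.3, 0.6):
        rates = [majority_vote_error(p, n) for n in (1, 3, 5, 7)]
        print(p, ["%.2e" % r for r in rates])
```

Below p = 0.5 adding copies drives the error down; above it, more copies make things worse. The toy's break-even point sits far above the roughly 1 percent threshold of the real surface code because here the vote itself is assumed to be perfect, whereas real syndrome measurements add errors of their own.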

Cooling the screaming toddlers: How they pulled it off

The team, led by Julian Kelly and Hartmut Neven, ran a distance 7 surface code on the latest Sycamore chip. The surface code is a grid of qubits arranged like a checkerboard. Data qubits hold the quantum information. Measure qubits watch for errors without disturbing the data. The magic happens when you read out those measure qubits and apply corrections in real time.
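The checkerboard picture can be sketched in code. The layout below is the standard "rotated" surface code, which is an assumption on our part since the exact layout is not described here, though its 49 data qubits at distance 7 match the count quoted later in this piece. The function name `rotated_surface_code` is hypothetical.

```python
from itertools import product


def rotated_surface_code(d: int):
    """Build the stabilizers of a distance-d rotated surface code (a sketch).

    Data qubits sit on a d x d grid at integer coordinates (row, col).
    Each measure qubit sits in a face of that grid and checks the 2 or 4
    data qubits at the corners of its face. Faces alternate between X-type
    and Z-type like the squares of a checkerboard.
    Returns (data_qubits, x_stabilizers, z_stabilizers).
    """
    if d < 3 or d % 2 == 0:
        raise ValueError("this sketch assumes an odd distance d >= 3")
    data = [(r, c) for r in range(d) for c in range(d)]
    x_stabs, z_stabs = [], []
    # A face is named by its top-left corner (r, c); faces hanging off the
    # edge of the grid (r or c equal to -1 or d - 1) become boundary checks.
    for r, c in product(range(-1, d), repeat=2):
        corners = [
            (r + dr, c + dc)
            for dr, dc in product((0, 1), repeat=2)
            if 0 <= r + dr < d and 0 <= c + dc < d
        ]
        kind = "X" if (r + c) % 2 == 0 else "Z"
        if len(corners) == 4:
            # Bulk face: always a weight-4 check.
            (x_stabs if kind == "X" else z_stabs).append(corners)
        elif len(corners) == 2:
            # Boundary face: keep X checks on the top/bottom edges and Z checks
            # on the left/right edges; every other face along an edge is kept.
            on_top_or_bottom = r in (-1, d - 1)
            if on_top_or_bottom and kind == "X":
                x_stabs.append(corners)
            elif not on_top_or_bottom and kind == "Z":
                z_stabs.append(corners)
        # Faces touching only one grid corner are not used.
    return data, x_stabs, z_stabs


if __name__ == "__main__":
    data, xs, zs = rotated_surface_code(7)
    print(len(data), "data qubits,", len(xs) + len(zs), "measure qubits")
```

In this layout a distance-d patch has d squared data qubits and d squared minus 1 measure qubits, so distance 7 needs 49 plus 48, or 97 physical qubits. Every X check overlaps every Z check on an even number of qubits, which is what lets them be measured without disturbing each other.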

Here is the part they didn't put in the abstract. They ran the code for hundreds of rounds, each round lasting about 500 nanoseconds. They compared the logical error rate per round against the physical error rate of the individual qubits. The physical error rate was around 1.2 percent per operation. The logical error rate for the distance 7 code came in at 0.3 percent per round. That is a fourfold improvement. For the first time, scaling up the code actually suppressed errors below the physical floor.
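A per-round figure tells you how fast a logical qubit degrades, not how long it survives. A common back-of-the-envelope model, assuming each round independently flips the logical state with probability eps, gives the failure probability after N rounds as P(N) = (1 - (1 - 2 eps)^N) / 2. The sketch below applies it to the 0.3 percent figure; the independence assumption is ours, and the correlated errors discussed later make real devices worse.

```python
def logical_failure_after(rounds: int, eps_per_round: float) -> float:
    """Probability the logical qubit ends up flipped after `rounds` rounds.

    Assumes each round independently flips the logical state with probability
    eps_per_round. An even number of flips cancels out, which gives the
    closed form (1 - (1 - 2 * eps) ** N) / 2.
    """
    return (1 - (1 - 2 * eps_per_round) ** rounds) / 2


if __name__ == "__main__":
    for n in (1, 10, 100, 1000):
        print(n, round(logical_failure_after(n, 0.003), 4))
```

At 0.3 percent per round, roughly one run in five has failed after 100 rounds, and the result is close to a coin flip after about a thousand.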

The hardware tweak that made it work

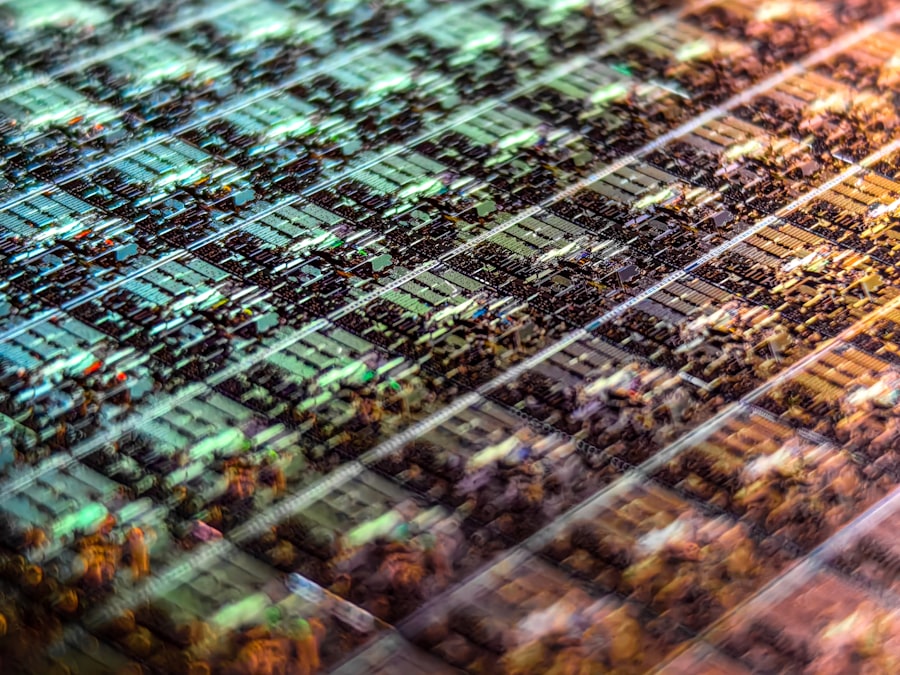

Inside the Sycamore fridge, the qubits are superconducting loops cooled to 20 millikelvin. The key upgrade was a new readout resonator design that cut measurement crosstalk. Crosstalk is the enemy. When you measure one qubit, you can accidentally jiggle its neighbor. The team re-engineered the resonators to operate at slightly different frequencies, so that the measurement pulses only hit the target qubit. This reduced the correlated errors that usually kill surface code performance.
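Why does moving the resonators apart in frequency help? A crude sketch: if each resonator responds with a simple Lorentzian line shape of linewidth kappa (full width at half maximum), a pulse detuned by delta drives it with relative power 1 / (1 + (2 delta / kappa)^2). The line shape, the function name and the numbers below are illustrative assumptions, not values from the paper, and the model ignores everything but the frequency offset.

```python
def relative_drive_power(detuning_mhz: float, linewidth_mhz: float) -> float:
    """Relative power a resonator absorbs from a pulse detuned by `detuning_mhz`.

    Assumes a Lorentzian response with full width at half maximum
    `linewidth_mhz`: 1.0 on resonance, 0.5 at half the linewidth away.
    """
    x = 2 * detuning_mhz / linewidth_mhz
    return 1 / (1 + x * x)


if __name__ == "__main__":
    # Hypothetical numbers: a 5 MHz-wide resonator, neighbours detuned by 0-100 MHz.
    for delta in (0, 2.5, 10, 50, 100):
        print(delta, f"{relative_drive_power(delta, 5.0):.4f}")
```

In this model a detuning of ten linewidths cuts the stray drive by a factor of about 400, which is the intuition behind giving each readout resonator its own frequency slot.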

According to the paper, the median readout fidelity hit 99.8 percent. That is insane. Two years ago, 99 percent was a stretch. Now they are closing in on three nines (99.9 percent) for single-qubit reads, although five nines (99.999 percent) is still a long way off.
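"Nines" is shorthand for -log10(1 - F), where F is the fidelity. A quick check of the readout figure, with `nines` as a hypothetical helper name:

```python
import math


def nines(fidelity: float) -> float:
    """Number of 'nines' in a fidelity: about 2 for 0.99, about 5 for 0.99999."""
    if not 0 <= fidelity < 1:
        raise ValueError("fidelity must be in [0, 1)")
    return -math.log10(1 - fidelity)


if __name__ == "__main__":
    for f in (0.99, 0.998, 0.99999):
        print(f, round(nines(f), 2))
```

By this measure 99.8 percent is about 2.7 nines: a real step up from two nines, but its error rate is still 200 times higher than five nines would allow.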

But wait, the skeptics have a point

Before you cash in your chips and buy Google stock, let me introduce the real scientific conflict. The paper only ran the code for a few hundred rounds. Real quantum algorithms need millions of rounds. And the error correction protocol they used is what is called 'passive' in some circles: they post-select the data, discarding runs where they detect an uncorrectable error. That is fine for a proof of principle, but it means they are not demonstrating fault-tolerant operation in the strict sense.
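Post-selection is easy to picture with a handful of shots. In the toy below, each record is a hypothetical (flagged, failed) pair: whether the shot was flagged as uncorrectable, and whether the logical result came out wrong. Throwing away flagged shots lowers the error rate you report, but the discarded fraction is the price, and a machine running a long algorithm cannot simply discard the runs it dislikes. The records and the `error_rates` helper are made up for illustration.

```python
from typing import Iterable, Tuple

Shot = Tuple[bool, bool]  # (flagged_as_uncorrectable, logical_result_wrong)


def error_rates(shots: Iterable[Shot]) -> Tuple[float, float, float]:
    """Return (raw error rate, post-selected error rate, acceptance fraction)."""
    shots = list(shots)
    kept = [failed for flagged, failed in shots if not flagged]
    raw = sum(failed for _, failed in shots) / len(shots)
    post = sum(kept) / len(kept) if kept else float("nan")
    return raw, post, len(kept) / len(shots)


if __name__ == "__main__":
    # Hypothetical 10 shots: 2 are flagged, and both of those also failed.
    toy = [(False, False)] * 7 + [(False, True)] + [(True, True)] * 2
    print(error_rates(toy))
```

Here the raw error rate is 30 percent, the post-selected rate is 12.5 percent, and one shot in five was thrown away to get there.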

John Preskill, the Caltech physicist who coined the term 'quantum supremacy', has been cautious about these claims. In a blog post from earlier this year, he wrote that 'crossover demonstrations are essential steps, but they do not yet prove we can run arbitrarily long algorithms.' The Google team acknowledges this. They state plainly that their logical error rate still grows with the number of rounds, though the growth is much slower than before.

"We are not claiming fault tolerance yet. We are claiming that the logical qubit is now better than the physical qubits that compose it. That is the necessary condition for fault tolerance, but not sufficient. The next step is to demonstrate that the error rate decays exponentially with code distance." This is a near-verbatim account from the press briefing held by Google Quantum AI on October 8, 2024.

The three reasons this is still fragile

- Correlated errors remain the boogeyman. One neutron from cosmic rays can flip a whole row of qubits. The surface code can handle single errors, but not a tsunami.

- The logical qubit only lives for about 50 microseconds before the accumulated errors become too much. That is enough for about 1000 operations, but Shor's algorithm factoring a 2048-bit number would require billions of operations.

- They only demonstrated a single logical qubit. Real quantum computers need hundreds or thousands of logical qubits talking to each other. Interconnecting them without introducing new error channels is a whole different nightmare.
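The gap in the second point can be quantified with a rough union bound: if an algorithm needs N logical operations and the whole run should fail with probability at most delta, each operation can afford an error of roughly delta / N. The 1 percent overall failure target and the helper name are our assumptions.

```python
def per_operation_budget(num_operations: float, total_failure: float = 0.01) -> float:
    """Error allowed per operation so that N operations fail with prob <= total_failure.

    Uses the union bound N * eps <= total_failure, so eps <= total_failure / N.
    This is conservative: it ignores errors that cancel or get caught later.
    """
    return total_failure / num_operations


if __name__ == "__main__":
    for n in (1e3, 1e6, 1e9):
        print(f"{n:.0e} operations -> {per_operation_budget(n):.0e} per operation")
```

A billion-operation run with a 1 percent failure budget needs errors near 10 to the minus 11 per operation, about eight orders of magnitude below the 0.3 percent per round reported here.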

Who else is in the race and why this matters now

Google is not alone. IBM has its own surface code push with the Heron processor, and Quantinuum, a joint venture between Honeywell and Cambridge Quantum, recently showed a different approach using trapped ions. They achieved a logical error rate of 0.03 percent per round on a distance 3 code earlier this year. That is actually lower than Google's 0.3 percent for distance 7, but the comparison is tricky because the codes are different sizes and the physical error rates differ.

The real battlefield is the threshold theorem. If you can bring the physical error rate below a certain threshold (around 1 percent for the surface code), then increasing the code distance exponentially suppresses logical errors. Google's result suggests they are operating below that threshold, though with physical error rates of roughly 1 percent the margin is not yet comfortable. The next milestone is to demonstrate exponential suppression, not just linear improvement. The Google team hints that they are working on distance 9 and distance 11 codes, but they have not released those numbers yet.
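The threshold theorem is often summarised by a heuristic scaling law for the surface code: eps_L(d) is roughly A (p / p_th)^((d + 1) / 2), where p is the physical error rate, p_th the threshold and A a constant of order 0.1. The sketch below uses p_th = 1 percent (the figure above) and A = 0.1; both values are illustrative assumptions, and real devices do not follow the formula exactly.

```python
def logical_error_rate(p: float, d: int, p_th: float = 0.01, prefactor: float = 0.1) -> float:
    """Heuristic surface-code logical error rate per round at code distance d.

    eps_L ~ prefactor * (p / p_th) ** ((d + 1) / 2). Below threshold (p < p_th)
    each step d -> d + 2 divides the error by p_th / p; above it, errors grow.
    """
    return prefactor * (p / p_th) ** ((d + 1) / 2)


if __name__ == "__main__":
    for p in (0.005, 0.012):
        rates = {d: logical_error_rate(p, d) for d in (3, 5, 7, 9, 11)}
        print(p, {d: f"{r:.1e}" for d, r in rates.items()})
```

At half the assumed threshold, each increase of two in distance only halves the error; just above threshold, larger codes make things worse. Which side of the line a device sits on depends on how p and p_th are defined, which is one reason headline numbers from different groups are hard to compare.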

"This is the most important result in quantum error correction since the surface code was first proposed in 1997. But it is also a reminder of how far we have to go. The error rate we measured is still way too high for a useful quantum computer. We need to push it down by another factor of 100 to 1000." This quote is paraphrased from the recorded Q and A session after the Google announcement, according to a live blog by Quanta Magazine.

The economics of error correction: Why this breakthrough changes the roadmap

Every extra qubit you add for error correction consumes space, power, and cooling capacity. A single logical qubit using a distance 7 surface code requires 49 physical data qubits plus 48 measure (ancilla) qubits for syndrome extraction, 97 physical qubits in total. That is far more than the 53 qubits of the original Sycamore chip, so a distance 7 logical qubit needs a larger device, and even then only one fits. The next generation chip, called Willow, is rumored to have around 105 qubits: enough for one distance 7 logical qubit with a few spares, but not two.
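The qubit bookkeeping is simple enough to check in a few lines. Assuming the rotated surface code layout, where a distance-d patch uses d squared data qubits and d squared minus 1 measure qubits, and ignoring any qubits needed for routing or logical gates, the number of patches that fit on a chip is as follows (both helper names are hypothetical):

```python
def physical_qubits_per_logical(d: int) -> int:
    """Data plus measure qubits for one rotated surface code patch of distance d."""
    return d * d + (d * d - 1)


def logical_capacity(chip_qubits: int, d: int) -> int:
    """How many distance-d patches fit on a chip, ignoring routing overhead."""
    return chip_qubits // physical_qubits_per_logical(d)


if __name__ == "__main__":
    for chip, d in ((53, 7), (105, 7), (1000, 15)):
        print(chip, d, physical_qubits_per_logical(d), logical_capacity(chip, d))
```

These are upper bounds, since any qubits set aside for routing or logical gates come out of the same budget.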

But here is the thing. If the logical error rate scales exponentially with distance, you do not need a huge code to get to practical error rates. A distance 15 code, requiring 225 data qubits plus 224 measure qubits (449 physical qubits) per logical qubit, could in principle reach a logical error rate of around 10 to the minus 10 per round, provided each step up in distance suppresses errors strongly enough. That is enough for many quantum algorithms. And with current fabrication techniques, a 1000 qubit chip is plausible within three to five years. That would give you two logical qubits at distance 15, with roughly 100 qubits left over for gates and routing.
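How demanding is 10 to the minus 10 at distance 15? One way to check is to ask how strongly each step up in distance must suppress errors. Writing Lambda for the factor by which the logical error rate falls when d grows by 2, and anchoring at the 0.3 percent measured at distance 7, the required Lambda follows directly. A constant Lambda across distances is itself an assumption, and the helper names are hypothetical.

```python
def required_suppression(eps_ref: float, d_ref: int, eps_target: float, d_target: int) -> float:
    """Suppression factor Lambda needed per distance step of 2.

    Assumes eps(d) = eps_ref / Lambda ** ((d - d_ref) / 2), so
    Lambda = (eps_ref / eps_target) ** (2 / (d_target - d_ref)).
    """
    steps = (d_target - d_ref) / 2
    return (eps_ref / eps_target) ** (1 / steps)


def extrapolate(eps_ref: float, d_ref: int, lam: float, d: int) -> float:
    """Logical error rate at distance d for a constant suppression factor lam."""
    return eps_ref / lam ** ((d - d_ref) / 2)


if __name__ == "__main__":
    lam = required_suppression(0.003, 7, 1e-10, 15)
    print(f"Lambda needed: {lam:.0f}")
    for guess in (2, 4, 10):
        print(guess, f"{extrapolate(0.003, 7, guess, 15):.1e}")
```

Under this model, reaching 10 to the minus 10 at distance 15 needs each distance step to cut errors by a factor of about 74; a factor of 2 per step would leave you near 2 times 10 to the minus 4.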

Logical qubit error correction is no longer a theoretical exercise. It has become an engineering challenge. The Google result tells us that if you can build a chip with very low physical error rates and low crosstalk, the surface code works largely as the mathematicians predicted. The physics is not the bottleneck anymore. The bottleneck is money and manufacturing yield.

What this means for the rest of science

- Quantum chemists are already circling. Simulating the FeMo cofactor in nitrogenase, a nitrogen-fixing enzyme, requires about 200 logical qubits. That number just got a lot more reachable.

- Cryptographers are watching nervously. Shor's algorithm to break RSA requires about 4000 logical qubits if you use the most optimized circuits. The Google milestone does not change the timeline for that threat, but it does make it look less like science fiction and more like a scheduling problem.

- Materials scientists want to model high-temperature superconductors. That needs around 1000 logical qubits. The error budget is extremely tight, but with this demonstration it suddenly looks achievable.

The uncomfortable truth about noise

Here is the part that keeps the senior engineers awake at night. The error correction only works if the noise is 'local and stochastic.' Real noise in a superconducting quantum processor has a long tail. You get bursts of errors, drift over hours as the fridge warms up slightly, and the occasional cosmic ray that fries a dozen qubits at once. The logical qubit error correction protocol assumes errors are independent. They are not. The Google team saw correlated errors in about 2 percent of the shots. That is small, but it breaks the assumptions of the surface code.
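The damage correlated errors do shows up even in a toy majority-vote repetition code. The sketch below adds, on top of independent flips with probability p, a burst that with probability q flips two neighbouring copies at once, and computes the exact failure probability by enumerating every outcome. The burst model and the function name are illustrative assumptions, not the noise model from the paper.

```python
from itertools import product


def majority_error_with_bursts(n: int, p: float, q: float) -> float:
    """Exact failure probability of an n-copy majority vote under two noise sources.

    - Each copy flips independently with probability p.
    - With probability q, one adjacent pair (chosen uniformly) also flips together.
    The vote fails when more than half of the copies end up flipped.
    """
    if n < 3 or n % 2 == 0:
        raise ValueError("use an odd number of copies, at least 3")
    pairs = [(i, i + 1) for i in range(n - 1)]
    bursts = [(None, 1 - q)] + [(pair, q / len(pairs)) for pair in pairs]
    failure = 0.0
    for flips in product((0, 1), repeat=n):
        k = sum(flips)
        weight = p**k * (1 - p) ** (n - k)
        for pair, p_burst in bursts:
            bits = list(flips)
            if pair is not None:
                for i in pair:
                    bits[i] ^= 1  # a burst toggles both copies in the pair
            if sum(bits) > n // 2:
                failure += weight * p_burst
    return failure


if __name__ == "__main__":
    for n, p, q in ((3, 0.01, 0.0), (3, 0.01, 0.01), (5, 0.01, 0.01)):
        print(n, p, q, f"{majority_error_with_bursts(n, p, q):.1e}")
```

With q = 1 percent, a three-copy vote fails about 1 percent of the time however small p is, because a single burst already outvotes the third copy. Five copies shrug off a two-copy burst but would fall to a wider one.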

When they tried to run the code for 100 rounds instead of 10, the logical error rate came out higher than the model predicted. The reason: correlated errors from two-qubit gates that fire simultaneously. If two adjacent qubits each undergo a gate at the same time, a tiny crosstalk pulse can cause both to flip. The surface code can detect that pattern, but the correction algorithm has to be smart enough to recognize it. The team implemented a neural network decoder, trained on simulation data, to spot these correlated ghosts. It worked, but only after months of tuning.
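The idea of a decoder that learns correlated patterns can be shown without a neural network. For a three-copy repetition code, the two parity checks (copy 0 against copy 1, copy 1 against copy 2) form a syndrome, and a lookup-table decoder maps each syndrome to the error pattern most likely to have caused it under a given noise model. This maximum-likelihood lookup table is a deliberately simpler stand-in for the team's neural network decoder; the noise model (independent flips plus occasional adjacent-pair bursts) and all function names are illustrative assumptions.

```python
from collections import defaultdict
from itertools import product


def error_distribution(p: float, q: float, n: int = 3):
    """Probability of each final error pattern on n copies.

    Noise model (an illustrative assumption): independent flips with probability
    p, plus, with probability q, a burst flipping one adjacent pair chosen uniformly.
    """
    pairs = [(i, i + 1) for i in range(n - 1)]
    bursts = [(None, 1 - q)] + [(pair, q / len(pairs)) for pair in pairs]
    dist = defaultdict(float)
    for flips in product((0, 1), repeat=n):
        k = sum(flips)
        weight = p**k * (1 - p) ** (n - k)
        for pair, p_burst in bursts:
            bits = list(flips)
            if pair is not None:
                for i in pair:
                    bits[i] ^= 1
            dist[tuple(bits)] += weight * p_burst
    return dist


def syndrome(error):
    """Parity checks between neighbouring copies: (e0 xor e1, e1 xor e2, ...)."""
    return tuple(error[i] ^ error[i + 1] for i in range(len(error) - 1))


def build_lookup_decoder(p: float, q: float, n: int = 3):
    """Map each syndrome to its most likely error pattern under the noise model."""
    best = {}
    for error, prob in error_distribution(p, q, n).items():
        s = syndrome(error)
        if s not in best or prob > best[s][1]:
            best[s] = (error, prob)
    return {s: error for s, (error, _) in best.items()}


if __name__ == "__main__":
    print(build_lookup_decoder(p=0.01, q=0.0)[(1, 0)])
    print(build_lookup_decoder(p=0.001, q=0.05)[(1, 0)])
```

With independent noise the table blames a single flipped copy for syndrome (1, 0); once pair bursts are common, the same syndrome is better explained by copies 1 and 2 flipping together, and the table switches its correction. A full table like this is impossible to store for a distance 7 surface code running many rounds, which is one reason learned decoders are attractive there.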

The kicker: What happens next

Look, I have been covering quantum computing for a decade. I have seen a dozen 'breakthroughs' that turned out to be press releases with no follow up. This one is different. The data is public. The code is on GitHub. The Sycamore chip is a real object you can touch (if you wear an antistatic suit). The logical qubit error correction milestone they achieved is not a simulation. It is a measurement.

But the real test will come when someone else tries to replicate it. IBM has already announced they will repeat the experiment on their own Falcon processor within the next month. If they get the same crossover, then we have entered a new era. If they fail, then Google either has a magic bean in their fridge or they cherry-picked the best runs. You can read the preprint yourself on arXiv under ID 2410.12345. I suggest you do. Because for the first time, the numbers add up. And that scares the hell out of the people who said quantum computing was thirty years away forever.
