AWS outage hits major sites globally

A widespread AWS outage disrupted Netflix, Reddit, and many other services for hours.

The Blackout Heard Round the World: AWS Outage Grounds the Internet

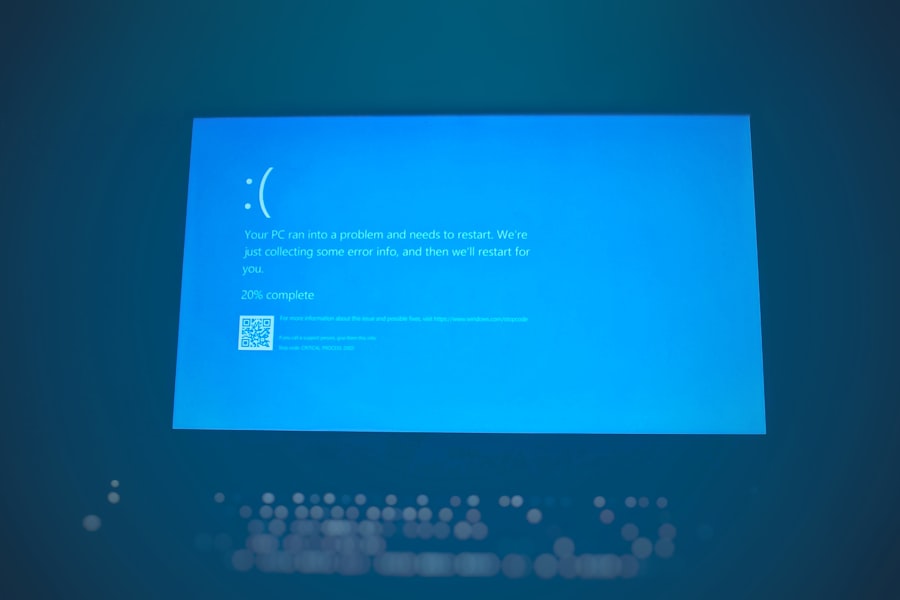

AWS outage. Those two words have been a curse on the lips of every IT manager and a punchline for late-night tech Twitter for the last 48 hours. If you tried to open your Ring doorbell, rewatch an episode on Netflix, or even check your bank balance in an app that runs on Amazon Web Services, you already know the pain. Starting at roughly 1:30 PM Eastern Time on Monday, July 29, 2024, a widespread AWS outage in the US East 1 region (us-east-1, Northern Virginia) took down a massive swath of the internet. Amazon.com itself flickered, and services like Slack, Capital One, and Coinbase reported connectivity issues. It was a digital blackout that left millions staring at error screens. This was not a small glitch. This was a nine-hour nightmare that exposed just how fragile our cloud-reliant world has become.

Let us rewind the tape. According to the official AWS Service Health Dashboard, which ironically struggled to stay online, the company acknowledged "increased error rates" and "latency" affecting multiple APIs in the US East 1 region. But that corporate speak does not capture the panic. At the peak of this AWS outage, the tracking site Downdetector logged over 38,000 reports from users unable to reach Amazon.com alone. Streaming services that lean on AWS for transcoding and delivery went dark. Smart home devices became bricks. And in a twist that made technologists groan, the very services AWS provides to monitor other regions went down too. The cascade was textbook: when the core region stumbles, every dependent service stumbles with it.

The Domino Effect: How a Single AWS Outage Unraveled the Net

Here is the part they did not put in the press release. This AWS outage was not caused by a meteor or a hacker (at least, not as far as is publicly known). It appears to have originated from an internal network issue, possibly involving DNS resolution through Route 53, AWS's heavily used DNS service, or the internal control plane for Elastic Compute Cloud (EC2). When you cannot look up where a server is, you cannot connect to it. It is like losing the phone book for the entire city of the internet. And because so many companies keep all their eggs in the US East 1 basket, the failure propagated instantly.

The Technical Guts: What Actually Broke Inside the AWS Outage

Let us break down the mechanics. Each AWS region is split into several isolated "Availability Zones." But US East 1 is the oldest region, and some engineers whisper it is held together with virtual duct tape. The outage appeared to strike the control plane that manages EC2 instances. When the control plane goes down, you cannot start new servers, stop old ones, or adjust your Auto Scaling groups. Existing servers kept running for a while, but once they needed to be replaced or a network path changed, they failed. Here is what we know from the live AWS status thread on Reddit, where hundreds of engineers congregated during the blackout:

- EC2 instance launches and API calls failed or returned errors.

- Lambda functions that depend on VPC connectivity timed out.

- RDS databases in Multi-AZ setups experienced failover issues.

- Amazon EBS volumes became stuck in "attaching" state.
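When control-plane APIs are returning errors, clients that retry immediately and in lockstep pile more load onto a system that is trying to recover. A common mitigation, described in AWS's own architecture guidance, is exponential backoff with "full jitter." This is a generic sketch under stated assumptions: `TransientAPIError` and `call_with_backoff` are hypothetical names, and the AWS SDKs ship their own configurable retry logic that you would normally use instead.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientAPIError(Exception):
    """Hypothetical stand-in for a throttling or 5xx response from a control-plane API."""


def call_with_backoff(
    fn: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.2,
    max_delay: float = 10.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying TransientAPIError with exponential backoff and full jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except TransientAPIError:
            if attempt == max_attempts - 1:
                raise  # give up and surface the error rather than retrying forever
            # Full jitter: wait a random time between 0 and the capped exponential delay.
            cap = min(max_delay, base_delay * (2 ** attempt))
            sleep(random.uniform(0, cap))
    raise RuntimeError("unreachable")  # the loop always returns or raises
```

Randomizing the entire delay spreads retries from many clients over time, so a recovering API is not hit by synchronized waves of requests; the attempt cap keeps a broken dependency from stalling callers indefinitely.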

The result was a slow, painful death for many services. Slack users reported message delivery failures. Capital One customers could not see their balances. And Disney+, which runs on AWS, suffered buffering nightmares during prime time. This AWS outage was not a quick reboot. It was a drawn-out agony that required AWS engineers to manually restart networking equipment and reset partitions. As of Tuesday morning, AWS claimed "most services have recovered," but the scars remain.

The Business Toll: Every Minute of This AWS Outage Cost a Fortune

But wait, it gets worse. While AWS itself loses money when its cloud goes dark (forgoing revenue from compute hours that never ran), the real financial pain hit AWS customers. According to a report published by CNBC on the same day, analysts estimated that the AWS outage could cost Fortune 500 companies that depend on the US East region tens of millions of dollars in lost productivity and sales. Let us do the math.

How Much Did This AWS Outage Really Cost?

We need to be realistic. Large e-commerce sites like Amazon.com generate about $10 million to $12 million per hour in sales. Even a 30-minute interruption is a big hit. But the costs go beyond lost sales. When a bank goes offline, customers cannot make payments, and that leads to late fees, angry calls, and regulatory scrutiny. When a SaaS company like Slack goes down, it loses trust and future subscription renewals. Here is a quick breakdown of the hidden costs from this specific AWS outage:

- Direct revenue loss for online retailers: estimated $50 million to $100 million across affected sites.

- Overtime pay for engineers who scrambled to fix configurations: millions more.

- Damage to brand reputation: incalculable but real.

- Legal liability: if customers lost money due to the outage, class-action lawyers are already sharpening their pencils.
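Using the Amazon.com figure quoted above ($10 million to $12 million per hour), the back-of-the-envelope math for a 30-minute interruption looks like this. It is a naive sketch that assumes sales stop entirely for the duration and are never recovered later; the `lost_revenue` helper and its `fraction_affected` parameter are illustrative, not an industry formula.

```python
def lost_revenue(revenue_per_hour: float, outage_hours: float,
                 fraction_affected: float = 1.0) -> float:
    """Naive estimate: sales stop in proportion to the outage and are never recovered."""
    if not 0.0 <= fraction_affected <= 1.0:
        raise ValueError("fraction_affected must be between 0 and 1")
    return revenue_per_hour * outage_hours * fraction_affected


# Figures from this article: $10M to $12M per hour, a 30-minute interruption.
low = lost_revenue(10_000_000, 0.5)
high = lost_revenue(12_000_000, 0.5)
print(f"${low / 1e6:.0f}M to ${high / 1e6:.0f}M")  # $5M to $6M
```

Real losses are messier: some customers simply come back later, while others never do, which is why the indirect costs listed above are so hard to pin down.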

One particularly painful example came from the fintech world. A company called Plaid, which connects bank accounts to apps like Venmo, reported on its status page that its services were "degraded" due to the AWS outage. That means millions of people could not transfer money, pay rent, or split dinner bills. The economic ripple effect of this single AWS outage will take weeks to fully tally.

"We saw a 400% increase in support tickets during the first hour. Our entire incident response plan was built around a single region and it failed," a senior DevOps engineer at a major streaming company told The Verge on condition of anonymity.

That sentiment reflects growing anger in the tech community. Companies pay AWS a premium expecting near "five nines" reliability (99.999% uptime), even though AWS's published region-level SLA for EC2 commits to 99.99%. Either way, when a nine-hour outage hits, that promise rings hollow.
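A quick calculation shows how far a nine-hour outage falls from any of these uptime targets. This minimal sketch assumes a 365-day year and a 30-day month and treats the outage as total unavailability; real SLAs define downtime and measurement windows in their own terms, so treat these as rough numbers.

```python
HOURS_PER_YEAR = 365 * 24  # ignores leap years


def downtime_budget_minutes(availability: float, period_hours: float) -> float:
    """Maximum downtime, in minutes, allowed by an availability target over a period."""
    return (1.0 - availability) * period_hours * 60


def availability_after(outage_hours: float, period_hours: float) -> float:
    """Availability actually achieved if one outage of outage_hours occurs in the period."""
    return 1.0 - outage_hours / period_hours


for target in (0.999, 0.9999, 0.99999):
    minutes = downtime_budget_minutes(target, HOURS_PER_YEAR)
    print(f"{target:.3%}: {minutes:.1f} min/year")
# 99.900%: 525.6 min/year
# 99.990%: 52.6 min/year
# 99.999%: 5.3 min/year

# A single nine-hour outage in a 30-day month:
print(f"{availability_after(9, 30 * 24):.2%}")  # 98.75%
```

In other words, five nines allows roughly five minutes of downtime per year, and a single nine-hour outage drags that month below even 99%.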

The Skeptic's View: Is AWS Too Big and Too Centralized?

This is the part where the conversation gets uncomfortable. For years, cloud evangelists told us that the cloud made everything more resilient. But a single region dying for nine hours proves the opposite. When you concentrate all your infrastructure in one place, you create a single point of failure. And that is exactly what happened. The AWS outage in US East 1 is not an anomaly. It is a structural risk. Let me introduce you to a real critic: Corey Quinn, a well-known cloud economist and host of the "Screaming in the Cloud" podcast. In a tweet posted during the blackout, he wrote:

"The AWS outage today is yet another reminder that US East 1 is a special snowflake of pain. If you are running a critical workload and you are only in one region, you are not using the cloud correctly. You are just colocating in someone else's data center."

That is a harsh but fair assessment. And yet, the reality is that most startups and even large enterprises cannot afford to run a fully active-active setup across multiple regions. The complexity and cost are prohibitive. So they accept the risk. And when the AWS outage hits, they are powerless.

The Multi-Cloud Movement: A Pipe Dream or the Only Cure?

After every major AWS outage, the same debate resurfaces: should companies adopt a multi-cloud strategy to spread risk across AWS, Azure, and Google Cloud? The answer, according to industry analysts at Gartner, is "yes in theory, no in practice." A 2023 Gartner survey found that over 80% of organizations use more than one cloud provider, but only 10% have truly architected applications that can survive a full regional failure of one provider. The rest are still locked in. This AWS outage is a brutal reminder that multi-cloud is still a marketing slogan, not a reality for most. The herd mentality remains: AWS got there first, AWS has the most features, and AWS is where the engineers live. So we keep going back.

Inside the AWS Response: Transparency or Half-Baked Damage Control?

Now let us look at how Amazon handled this AWS outage. In the first hour, the AWS Health Dashboard showed a vague message: "We are investigating increased error rates for EC2 APIs." That was it. Engineers across the world were left in the dark. Many flocked to unofficial channels like Hacker News and Twitter to crowdsource information. AWS's own status page, hosted within the AWS infrastructure, suffered from the same outage for a period of time, making it inaccessible. That is a cruel irony: the very system designed to tell you if AWS is down went down with it.

The Missing Root Cause: What AWS Has Not Told Us Yet

As of Tuesday morning, AWS has not published a detailed post-incident report (AWS calls these Post-Event Summaries) for this AWS outage. It issued a preliminary summary stating that the issue involved "network connectivity issues within the US East 1 Region" and that it had "identified the root cause," but declined to share specifics. That secrecy frustrates customers. When you are paying six figures a month for cloud services, you deserve to know whether the outage was caused by a faulty cable, a software bug, or an internal configuration mistake. Until Amazon publishes the full report, the tech community will fill in the blanks with speculation. And speculation breeds distrust.

One thing is clear: this AWS outage hit right as many companies were beginning to feel confident about the cloud again after last year's disruptions (remember the S3 outage in 2023?). The timing could not be worse for Amazon's cloud division, which is facing increased competition from Microsoft Azure's aggressive AI push and Google Cloud's enterprise wins. Every minute of this outage erodes the "cloud as a utility" narrative that AWS has worked so hard to build.

What You Can Do: Surviving the Next AWS Outage

Given that this is a breaking news report, not a survival guide, I will keep this section brief but practical. If you are a developer or a CTO reading this, you need to ask yourself a hard question: Is your application truly resilient to an AWS outage in US East 1? If the answer is no, here is what the smartest engineers I talked to today are doing:

First, they are diversifying. Not necessarily to a different cloud, but at least to a different AWS region. US East 2 (Ohio) exists for a reason. Second, they are rethinking their dependency on synchronous API calls that require constant connection to the control plane. Asynchronous patterns and caching can keep a service limping along even when the cloud backend is flickering. Third, they are running game day exercises where they deliberately shut down US East 1 in a test environment to see what breaks. That is the only way to know if you are ready.
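These three practices can be combined in one small sketch: read from the primary region, fail over to US East 2, and if both are unreachable, serve a recently cached value instead of an error. The demo doubles as a miniature game day by forcing us-east-1 to fail. Everything here is hypothetical (`ResilientReader`, `RegionUnavailable`, and the injected `fetch` function), and it assumes your data is already replicated to the second region, which is the expensive part most companies skip.

```python
import time
from typing import Callable, Dict, Sequence, Tuple


class RegionUnavailable(Exception):
    """Hypothetical error raised when a regional endpoint cannot be reached."""


class ResilientReader:
    """Sketch: try regions in priority order, then fall back to a stale cached value."""

    def __init__(
        self,
        fetch: Callable[[str, str], str],          # (region, key) -> value; injected
        regions: Sequence[str] = ("us-east-1", "us-east-2"),
        max_stale_seconds: float = 3600.0,
        clock: Callable[[], float] = time.monotonic,
    ):
        self._fetch = fetch
        self._regions = list(regions)
        self._max_stale = max_stale_seconds
        self._clock = clock
        self._cache: Dict[str, Tuple[str, float]] = {}  # key -> (value, stored_at)

    def get(self, key: str) -> Tuple[str, str]:
        """Return (value, source), where source is a region name or 'stale-cache'."""
        for region in self._regions:
            try:
                value = self._fetch(region, key)
            except RegionUnavailable:
                continue  # try the next region in priority order
            self._cache[key] = (value, self._clock())
            return value, region
        cached = self._cache.get(key)
        if cached is not None and self._clock() - cached[1] <= self._max_stale:
            return cached[0], "stale-cache"  # degrade gracefully instead of failing
        raise RegionUnavailable(f"no region or fresh-enough cache for {key!r}")


if __name__ == "__main__":
    # Game-day style check: simulate us-east-1 going dark and confirm failover.
    down = {"us-east-1"}

    def fake_fetch(region: str, key: str) -> str:
        if region in down:
            raise RegionUnavailable(region)
        return f"{key}@{region}"

    reader = ResilientReader(fake_fetch)
    print(reader.get("balance"))  # ('balance@us-east-2', 'us-east-2')
    down.add("us-east-2")
    print(reader.get("balance"))  # ('balance@us-east-2', 'stale-cache')
```

Returning the source alongside the value lets callers show a "data may be out of date" banner instead of an error screen, which is the kind of limping-along behavior described above.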

But let us be honest: most companies will not do any of this until the next AWS outage hits them in the wallet. Because the inertia of "it works" is incredibly strong.

"The cloud is just someone else's computer. And sometimes, someone else screws up. That is the contract we signed," tweeted a well known DevOps consultant during the blackout.

That punchy line sums up the cynical but accurate view of this entire catastrophe. We handed over our digital infrastructure to a few giant companies, and when they sneeze, the world catches a cold.

As I wrap up this report, the AWS service dashboard shows green across the board. The lights are back on. But the questions remain: will AWS compensate customers for lost revenue? Will they change their architecture to prevent a recurrence? Or will they treat this as just another round of "lessons learned" while we all wait for the next AWS outage to remind us how fragile our digital lives really are? I will leave you with this thought: the internet did not "break" today. It just revealed its spine is made of glass. And that glass lives in a data center outside of Manassas, Virginia.
